Developed for automobiles, proven in medicine.

People have recorded the world visually since the beginning of time. The eye is a proven tool for precisely this purpose, perfectly adapted to recognizing surroundings under an extremely wide range of lighting conditions. Yet the eye is ill-suited to measuring absolute brightness values, which is necessary, for example, for reading test strips in medical diagnostics. A variety of technologies have therefore been developed to relieve humans of this task and automate it.

Light-sensitive semiconductors form the basis of image sensors. They measure brightness values quickly and reliably and, combined with image recognition algorithms, deliver reproducible results. Besides surveillance cameras, machine vision and gaming, common application areas include the automotive industry and medical engineering. Imaging can be used to detect structures, recognize traffic signs and other cars on the road, measure concentrations and identify bar codes. This automation not only minimizes the risk of human error but also helps reduce workloads.

Requirements in Medicine and Diagnostics

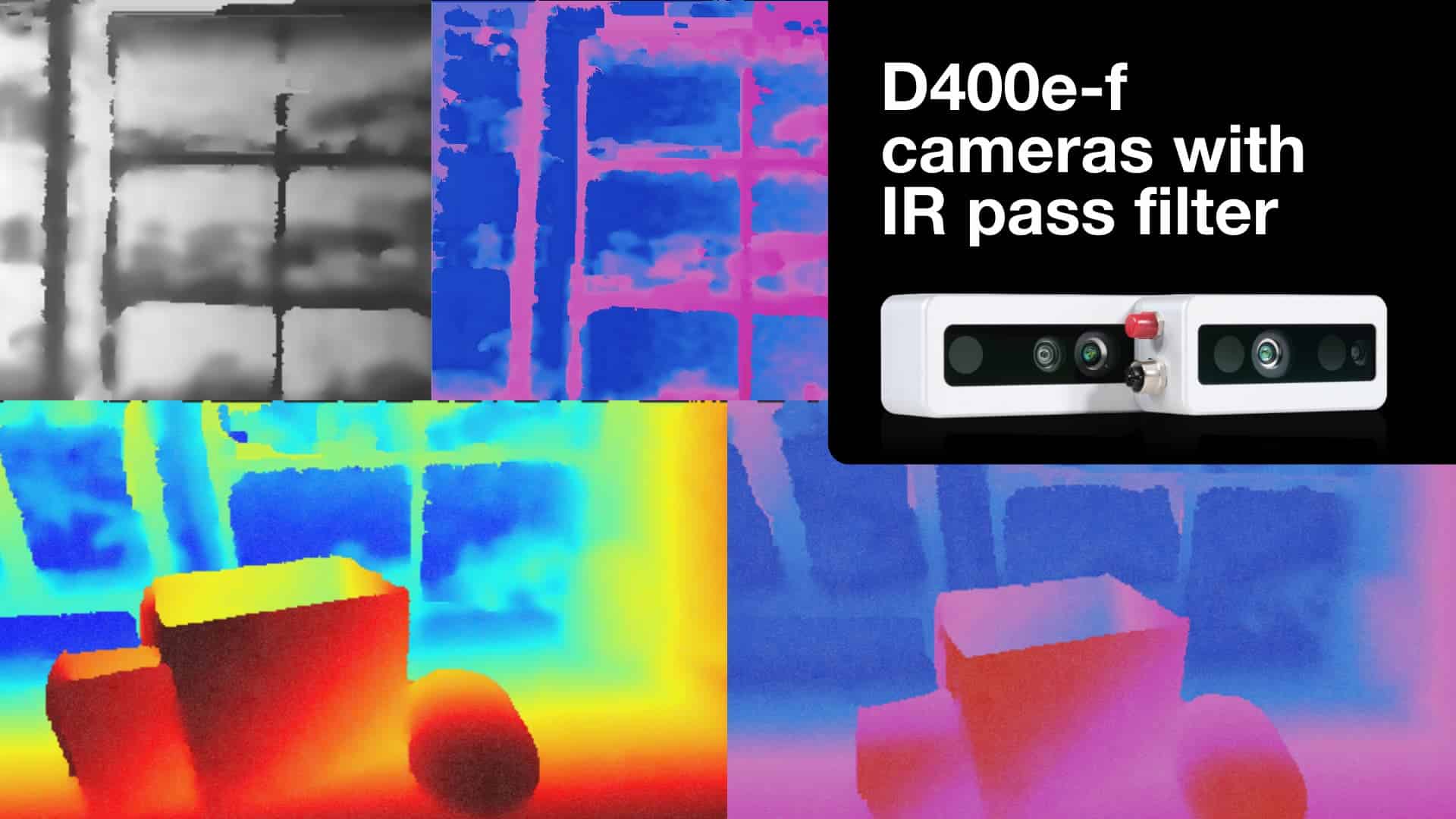

In the automotive industry, self-driving cars are expected to record their surroundings using optical sensors, among other things (Pic 2). These sensors must not only be powerful, robust and durable, but also remain available for many years in case repairs or software updates are needed. Long-term availability and a long service life are important in the medical sector too. Here, every device is individually approved, and the approval expires as soon as even one component is changed. A typical development cycle lasts around five years, so every component needs to work reliably for many years and remain available for as long as possible, at the very least until the successor model is approved. As in the automotive industry, many measurements in medicine are made by optical imaging, for example recording the transmission or reflection of a sample or test strip under specific lighting conditions.

Avoid Errors – Save Material and Time

Photo sensor technology has other advantages beyond taking measurements. It can also help avoid errors when processing a medical sample. It can reliably identify samples marked with a QR code or bar code, ensuring consistent and correct patient allocation. To a certain degree it can even compensate for user errors. For example, if a test strip is not fully wetted with the liquid being tested, the system can adjust for this. If the wetted area is large enough, only the information from the "acceptable" portion of the image is used. If the area is too small for a meaningful measurement, an error message is returned instead of an invalid result. Image recognition thus saves time and sample material, which directly benefits both patient and doctor. It replaces manual visual reading, making the measurement easier to use, more precise and more reliable, because the result is reproducible regardless of the user's condition on the day or the lighting at different times of day (Pic 3). In the end, not only can the diagnosis be made more quickly, but treatment can also start earlier and be more precisely tailored to the patient's needs.
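The partial-wetting logic described above can be sketched as follows. This is a minimal illustration, not the method used in any particular device: it assumes an upstream segmentation step has already produced a per-pixel "wetted" mask, and the names `read_test_strip`, `InsufficientSampleError` and the 50 % acceptance threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StripReading:
    mean_intensity: float   # average brightness over the wetted pixels only
    wetted_fraction: float  # share of the test field that was wetted


class InsufficientSampleError(Exception):
    """Raised instead of returning an invalid result."""


def read_test_strip(intensities, wetted_mask, min_wetted_fraction=0.5):
    """Evaluate only the 'acceptable' (wetted) portion of the test field.

    intensities: 2D list of pixel values for the test field
    wetted_mask: 2D list of bools, True where the strip is wetted
                 (assumed to come from a separate segmentation step)
    min_wetted_fraction: hypothetical acceptance threshold
    """
    # Collect pixel values only where the mask marks the strip as wetted.
    values = [v for row_v, row_m in zip(intensities, wetted_mask)
              for v, m in zip(row_v, row_m) if m]
    total = sum(len(row) for row in intensities)
    fraction = len(values) / total if total else 0.0

    # Too little wetted area: report an error rather than a misleading value.
    if not values or fraction < min_wetted_fraction:
        raise InsufficientSampleError(
            f"only {fraction:.0%} of the test field is wetted")
    return StripReading(sum(values) / len(values), fraction)
```

For a field where four of six pixels are wetted, the dry pixels are simply ignored:

```python
reading = read_test_strip([[100, 100, 20], [100, 100, 20]],
                          [[True, True, False], [True, True, False]])
```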

Which Sensor?

The two most commonly used image sensor technologies are CMOS (complementary metal-oxide semiconductor) and CCD (charge-coupled device). Both measure light intensity with high spatial and temporal resolution, enabling a downstream image analysis system to perform the necessary pattern detection. In both sensor types, light striking the photodiodes generates a current that depends on its brightness. In each pixel, this current charges a capacitor, and the stored charge forms the image information. The CCD sensor reads out the data one line at a time. The CMOS sensor, by contrast, can address each pixel directly and read out individual pixels independently of one another, or read out the entire image at once. It can also integrate the A/D converter on-chip, so digital values are output directly. CMOS sensors tend to be better suited to challenging applications because they offer more functions and a higher read-out speed, and work more reliably at both high and low temperatures.
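The signal chain of a single pixel (photocurrent charging a capacitor, followed by A/D conversion) can be illustrated with an idealized model. This sketch ignores noise, dark current and non-linearity; the capacitance of 10 fF, the 1.0 V reference voltage and the 10-bit converter are assumed illustrative values, not datasheet figures for any specific sensor, and `pixel_to_digital` is a hypothetical name.

```python
def pixel_to_digital(photocurrent_a, exposure_s,
                     capacitance_f=10e-15,  # assumed pixel capacitance (10 fF)
                     v_ref=1.0,             # assumed ADC full-scale voltage
                     bits=10):              # assumed ADC resolution
    """Idealized pixel: integrate photocurrent on a capacitor, then digitize."""
    charge_c = photocurrent_a * exposure_s   # Q = I * t
    voltage_v = charge_c / capacitance_f     # V = Q / C
    voltage_v = min(voltage_v, v_ref)        # pixel saturates at full scale
    max_code = (1 << bits) - 1               # 1023 for a 10-bit converter
    return round(voltage_v / v_ref * max_code)
```

With a photocurrent of 1 pA, doubling the exposure from 2 ms to 4 ms roughly doubles the output code (205 vs. 409) until the pixel saturates at 1023. This proportionality is exactly the linear behavior discussed in the technology box.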


Photo Sensor Technology in Practice

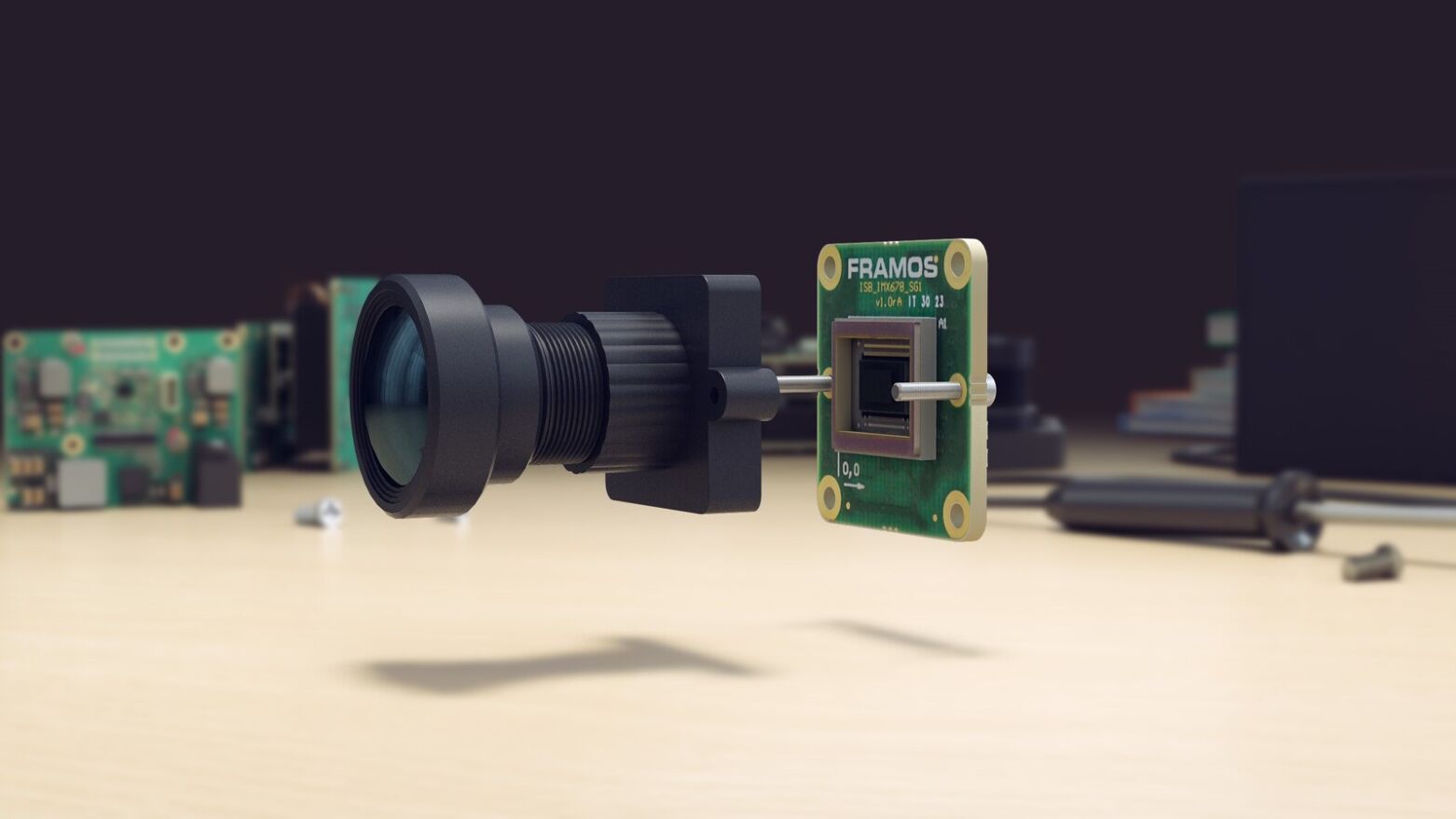

One good example of an image sensor that satisfies even medical engineering requirements is the MT9V024 from ON Semiconductor, available from Framos. It was originally developed for the automotive industry, where components must remain available even longer than in the medical sector, and the manufacturer therefore guarantees that the sensor will be available for ten years. The 1/3" CMOS sensor can be used between -30°C and +70°C and also detects near infrared with good sensitivity. This supplies more usable information for downstream image detection and allows illumination that is invisible to the human eye (Pic 3).

With its low power consumption of 0.3 W, it is also ideally suited for mobile devices that a doctor can use on site, either in their practice or in a hospital (Pic 4). The patient's key health data can thus be determined quickly and independently of a laboratory. Thanks to the sensor's linearity (cf. technology box), the values recorded by identical devices can all be compared with one another. Automating the measuring devices reduces the total cost of key medical readings, while long-term availability ensures that a defective device can be repaired or replaced as quickly as possible. Thanks to photo sensor technology, patients can now be diagnosed in a partially automated manner with greater accuracy and reliability. Treatment can start sooner and has a greater chance of success. And because every second counts when a diagnosis requires an immediate response, image sensors can even save lives.

Deep Dive into Technology: Linearity and High Dynamic Range

To measure absolute brightness values with a photo sensor, we must know the relationship between the amount of incident light and the signal strength. In the best case, the two are proportional: if the incident light doubles, the output voltage doubles. If the relationship is not proportional, brightness can still be measured reliably after calibration: the sensitivity curve recorded during calibration assigns the corresponding brightness to each voltage.
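Applying such a sensitivity curve amounts to a lookup with interpolation between calibration points. The sketch below assumes the curve is stored as a short table of (voltage, brightness) pairs and uses linear interpolation between them; the function name `make_calibration` and the example values are hypothetical, and a real device may use a denser table or a fitted function instead.

```python
from bisect import bisect_left


def make_calibration(voltages, brightnesses):
    """Build a voltage -> brightness function from calibration points.

    voltages must be strictly increasing; values between calibration
    points are linearly interpolated.
    """
    if len(voltages) != len(brightnesses) or len(voltages) < 2:
        raise ValueError("need at least two matching calibration points")
    if any(b <= a for a, b in zip(voltages, voltages[1:])):
        raise ValueError("voltages must be strictly increasing")

    def to_brightness(v):
        if not voltages[0] <= v <= voltages[-1]:
            raise ValueError("voltage outside calibrated range")
        i = bisect_left(voltages, v)  # first calibration point >= v
        if voltages[i] == v:
            return brightnesses[i]
        v0, v1 = voltages[i - 1], voltages[i]
        b0, b1 = brightnesses[i - 1], brightnesses[i]
        return b0 + (b1 - b0) * (v - v0) / (v1 - v0)

    return to_brightness
```

For a deliberately non-proportional example curve, `make_calibration([0.0, 0.5, 1.0], [0, 100, 400])` maps 0.75 V to a brightness of 250 (arbitrary units), even though 0.75 V is not a calibration point.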

The ratio of the largest to the smallest measurable brightness is the dynamic range. In scenes with very high dynamics (a candle in an otherwise unlit room, for instance) the photodiode reaches its limits. Either the details of the dark room are lost in the noise, or the details of the candle are lost in overexposed white. This issue can be resolved with a deliberately non-linear sensitivity curve, which maps a larger brightness range (high dynamic range, HDR) onto the limited signal range. The MT9V034 image sensor does this on-chip by splitting the exposure time into segments and controlling the pixels with a different voltage in each segment. Only this nonlinear mapping makes it possible to see very dark and very bright details in the image at the same time.
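The effect of such a curve can be sketched as a piecewise-linear (multi-slope) response: a steep slope for dark values and progressively flatter slopes for bright ones. This only models the resulting curve, not the on-chip mechanism, and the knee points in `KNEES` are hypothetical. Dynamic range is expressed here in decibels as 20·log10(max/min), a common convention for image sensors. Because the curve is monotonic, a known curve can also be inverted to recover absolute brightness, in line with the calibration idea above.

```python
import math
from bisect import bisect_left

# Hypothetical knee points (brightness, normalized signal); not datasheet values.
KNEES = ((0.0, 0.0), (100.0, 0.6), (1000.0, 0.9), (10000.0, 1.0))


def dynamic_range_db(max_brightness, min_brightness):
    """Ratio of largest to smallest measurable brightness, in decibels."""
    return 20 * math.log10(max_brightness / min_brightness)


def _interp(x, xs, ys):
    """Linear interpolation on increasing xs, clamped at both ends."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_left(xs, x)
    x0, x1, y0, y1 = xs[i - 1], xs[i], ys[i - 1], ys[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def hdr_response(brightness, knees=KNEES):
    """Compress brightness into [0, 1]: steep for dark, flat for bright."""
    xs, ys = zip(*knees)
    return _interp(brightness, xs, ys)


def hdr_inverse(signal, knees=KNEES):
    """Recover brightness from the signal; valid because the curve is monotonic."""
    xs, ys = zip(*knees)
    return _interp(signal, ys, xs)
```

With these example knees, a 10,000:1 scene (80 dB) fits into the signal range. On a 10-bit scale, a purely linear mapping would leave only about 10 codes for brightness values up to 100, while the multi-slope curve assigns them about 614 codes, so dark details are no longer lost in the noise.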